TPS and CTPS: how do they affect your B2B telemarketing campaigns?

Content

- Scraping Twitter Data Using BeautifulSoup

- Capturing Data Using Python

- Where To Get Twitter Data For Academic Research

- How To Scrape Twitter For Historical Tweet Data

- Does Anyone Know If Twitter Has Any Legal Term Document Or Policy That Rules The Use Of Twitter Data For Research Purposes?

- Running A Brief Analysis Of Accounts


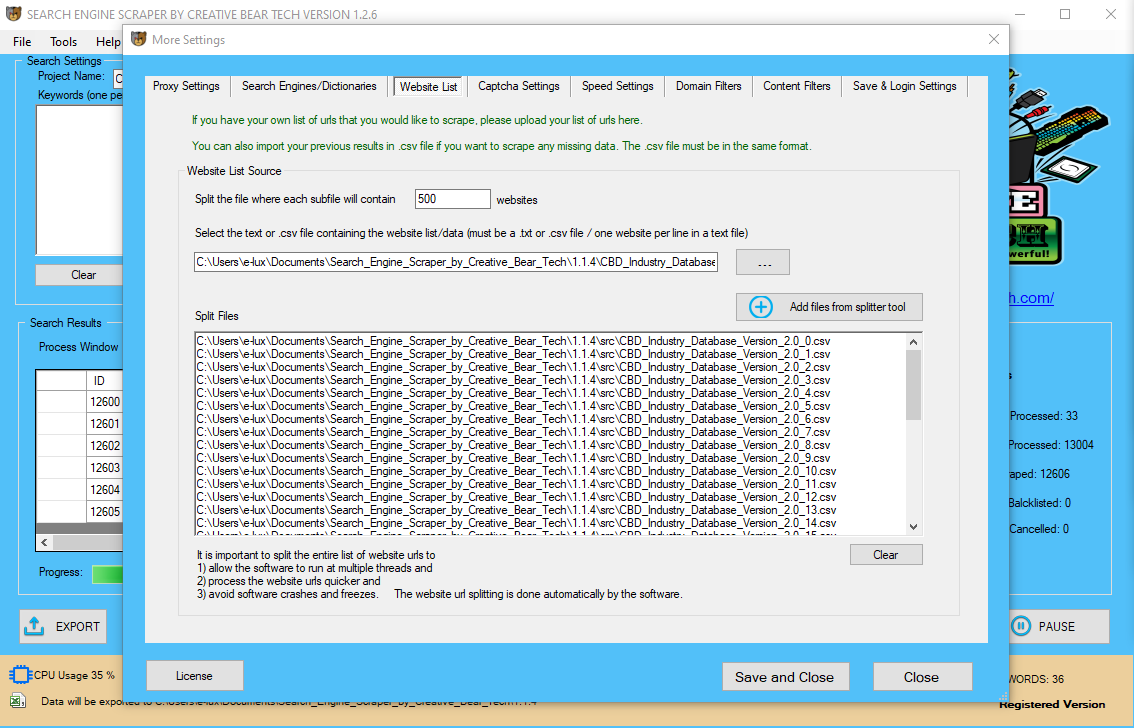

Then click on New Project and enter the URL to scrape. The Twitter profile will now be fully rendered in ParseHub, and you will be able to start selecting the information to extract. For our example today, we will be scraping our own Twitter profile, @ParseHub, for every tweet on our timeline. Note, however, that Twitter uses infinite scroll to load more tweets. Once the site is rendered, we'll first click on the username in the first tweet in the timeline. To make sure all tweets are selected, we will also click on the username of the second tweet on the timeline. Once the URLs are entered, Excel will begin pulling in the data.

Scraping Twitter Data Using BeautifulSoup


When scraping data from Twitter, the URLs in question must be the URLs where the information is publicly displayed, namely Twitter profile pages. If my support tickets are anything to go by, a lot of people need to fetch data about Twitter accounts, such as their number of tweets or followers. I also assume that the things we share in the public domain can be used without asking permission. I have tried to scrape Twitter data using BeautifulSoup and the requests library.

A service provider may have an arrangement with Twitter that gives it access to the “firehose” of all tweets to build this collection. Crimson Hexagon offers this kind of data acquisition. Providers may also offer value-added services for the Twitter data, such as coding, classification, analysis, or data enhancement. If you are not using your own tools for analysis, these value-added services may be extremely useful for your research (or they may be used in combination with your own tools).

Using the PLUS (+) sign on this conditional, add a Select command and choose the section of the website that contains all the tweets on the timeline. ParseHub is now set up to extract information about each tweet on the page.

I won't go into the details of how or why it works; it would more than likely be pretty boring! In the end, you will have working formulas to copy and paste into Excel. For the purpose of this post and dashboard, I am going to look strictly at importing data from individual Twitter profiles. To pull in data, you will need a list of the Twitter URLs that you want the data for. While we are not exactly traveling through time here, Excel needs something that will allow us to pull external data in. To make this happen, we need to install Niels Bosma's SEO Tools plugin.
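The BeautifulSoup-and-requests approach mentioned above comes down to downloading a public profile page and pulling numbers such as the tweet and follower counts out of its HTML. Here is a minimal sketch of the parsing step only. It uses Python's built-in `html.parser` in place of BeautifulSoup so that it runs without extra packages, and the markup in `SAMPLE_PROFILE_HTML` (the `data-stat` attribute and class names) is invented for illustration; real Twitter pages use different markup that changes often.

```python
from html.parser import HTMLParser

# Hypothetical markup for illustration only; not Twitter's real HTML.
SAMPLE_PROFILE_HTML = """
<ul class="profile-stats">
  <li class="stat" data-stat="tweets"><span class="value">1,204</span></li>
  <li class="stat" data-stat="followers"><span class="value">15,872</span></li>
</ul>
"""


class ProfileStatsParser(HTMLParser):
    """Collect the numbers found inside <li data-stat="..."> elements."""

    def __init__(self):
        super().__init__()
        self.stats = {}
        self._current = None  # name of the stat whose value is being read

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li" and "data-stat" in attrs:
            self._current = attrs["data-stat"]

    def handle_endtag(self, tag):
        if tag == "li":
            self._current = None

    def handle_data(self, data):
        text = data.strip()
        if self._current and text:
            # Strip thousands separators before converting to an integer.
            self.stats[self._current] = int(text.replace(",", ""))


def parse_profile_stats(html):
    """Return a dict such as {"tweets": 1204, "followers": 15872}."""
    parser = ProfileStatsParser()
    parser.feed(html)
    return parser.stats


print(parse_profile_stats(SAMPLE_PROFILE_HTML))
```

With BeautifulSoup the same idea would be expressed with a selector lookup instead of a parser subclass; either way, the selectors have to be adapted to whatever the live page actually serves.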

Capturing Data Using Python

As with buying data directly from Twitter, the cost will depend on factors such as the number of tweets and the length of the time period. I need to download random tweets from Twitter for a specific time period (of two years). For bots, crawling is the equivalent of a human visiting a web page. For example, the bots that power enrichment tools like Clearbit and Hunter crawl and scrape data. I tried to log in first using BeautifulSoup and then scrape the required page. Hopefully this guide has provided enough of a description of the landscape for Twitter data that you can move forward with your research. This obviously comes with the limitations described previously for the public Twitter APIs, but will be less expensive than the other Twitter data options. When considering purchasing tweets, you should be aware that it is not likely to be a trivial amount of money.

Where To Get Twitter Data For Academic Research

The previous two sections focused on where to find possible inauthentic networks, the data you need to create a small network, and how you can scrape data from Twitter. A more powerful way to automate the capture of data from Twitter and the visualisation of a network is with the software Gephi, using the Twitter API. I have tried using the statuses/sample API, but couldn't specify the time period. Twitter service providers generally provide reliable access to the APIs, with redundancy and backfill.

Selenium can open the web browser and scroll down to the bottom of the web page to let you scrape. These days tweets also contain images and videos, and loading them in the browser may be slow. Therefore, if you are planning to scrape thousands of tweets, it may consume a lot of time and involve intensive processing. The Twitter Followers Scraper will be enough to scrape Twitter messages by keyword or other criteria.

To access and download data from the Twitter API, you need credentials such as keys and access tokens. You get them by creating an app with Twitter. After gathering a list of celebrities, I needed to find them on Twitter and save their handles. Twitter's API provides a straightforward way to query for users and returns results in JSON format, which makes it easy to parse in a Python script. One wrinkle when dealing with celebrities is that fake accounts use similar or identical names and can be difficult to detect. Luckily, Twitter includes a helpful data field in each user object that indicates whether the account is verified, which I checked before saving the handle.

For example, we share the datasets we have collected at GW Libraries with members of the GW research community (but when sharing outside the GW community, we share only the tweet ids). However, only a small number of institutions proactively collect Twitter data; your library is a good place to inquire. Twitter's Developer Policy (which you agree to when you get keys for the Twitter API) places limits on the sharing of datasets. If you are sharing datasets of tweets, you can publicly share only the ids of the tweets, not the tweets themselves. Another party that wants to use the dataset has to retrieve the complete tweet from the Twitter API based on the tweet id (“hydrating”).
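The share-only-the-ids rule and the "hydrating" step described above can be sketched in a few lines. This is an illustration under stated assumptions, not an official workflow: it assumes each collected tweet is a dict with the `id_str` field found in Twitter's JSON, and it assumes a lookup endpoint that accepts up to 100 ids per request, so check the current API documentation for the real limit. The function names are my own.

```python
def dehydrate(tweets):
    """Reduce full tweet objects to their ids, the only part that may be shared publicly."""
    return [tweet["id_str"] for tweet in tweets]


def batch_ids(tweet_ids, batch_size=100):
    """Yield ids in batches, ready to be sent to a lookup endpoint for hydration.

    batch_size=100 is an assumption about the per-request limit.
    """
    for start in range(0, len(tweet_ids), batch_size):
        yield tweet_ids[start:start + batch_size]


# Example: a small collection is reduced to ids before sharing.
collected = [
    {"id_str": "1001", "text": "first tweet"},
    {"id_str": "1002", "text": "second tweet"},
]
shareable = dehydrate(collected)
print(shareable)

# The recipient would then hydrate the ids batch by batch.
for batch in batch_ids(shareable):
    print("would request", len(batch), "tweets")
```

Tweets that have since been deleted or protected will simply not come back when the recipient hydrates the ids.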


How To Scrape Twitter For Historical Tweet Data

Just take a look at @akiko_lawson, a Japanese account with over 50 million tweets. ParseHub will automatically pull the username and profile URL of every tweet. In this case, we'll remove the URL by expanding the selection and removing this extract command. So first, boot up ParseHub and grab the URL of the profile you'd like to scrape.

There are two ways to scrape Instagram with Octoparse. You can build a scraping task using Advanced Mode or use our pre-built template for Instagram. The template helps you fetch data very quickly, while building a fresh task gives you the flexibility to extract any data needed from the web page.

Since the SEO Tools plugin is now installed, we can use a function called “XPathOnURL”. This, like the flux capacitor, is what makes importing Twitter data into Excel possible. This list is essential in building audiences for Twitter ads or as a way to get more followers.

The WebScraper is a useful tool for scraping historical data from Twitter. By using the right filters, you can scrape advanced search data from Twitter. Such data can be quite valuable for market analysis. Selenium is one of the common and effective options for scraping data from Twitter with infinite scroll. It also gave me a great excuse to experiment with the tools available in the open source community for web scraping and mining Twitter data, which you can read about below.

After you click on the data format option, a file will be downloaded with all of the scraped Twitter data. These scrapers are pre-built and cloud-based, so you need not worry about choosing the fields to be scraped or downloading any software. The scraper and the data can be accessed from any browser at any time, and the data can be delivered directly to Dropbox. Data from social media feeds can be useful in conducting sentiment analysis and understanding user behavior towards a particular event, product, or statement.
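The infinite-scroll approach mentioned above usually boils down to a loop: scroll to the bottom, wait for more tweets to load, and stop once a scroll limit is hit or the page stops growing. The sketch below shows that loop. It assumes `driver` is a Selenium WebDriver (or anything else with an `execute_script` method); the function name, the default limit of 10 scrolls and the one-second pause are arbitrary choices of mine, not recommended values.

```python
import time


def scroll_timeline(driver, max_scrolls=10, pause_seconds=1.0):
    """Scroll to the bottom repeatedly until max_scrolls is reached
    or the page height stops changing.

    Returns the number of scrolls performed.
    """
    last_height = driver.execute_script("return document.body.scrollHeight")
    scrolls = 0
    while scrolls < max_scrolls:
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        scrolls += 1
        time.sleep(pause_seconds)  # give newly requested tweets time to load
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            break  # nothing new was loaded, so we have reached the end
        last_height = new_height
    return scrolls
```

With Selenium installed, this would be called as `scroll_timeline(webdriver.Firefox(), max_scrolls=50)` after loading the profile page. Capping the number of scrolls matters for prolific accounts, where scrolling to the very end could take hours.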

- Despite what the sales representative may tell you, most Twitter service providers' offerings focus on marketing and business intelligence, not academic research.

- The notable exception is DiscoverText, which is focused primarily on supporting academic researchers.

- DiscoverText lets you acquire data from the public Twitter Search API; purchase historical tweets through the Twitter data access tool, Sifter; or upload other kinds of textual data.

- Sifter provides free cost estimates and has a lower entry price point ($32.50) than purchasing from Twitter.

Today, we'll go over how to scrape tweets from a Twitter timeline and export them all into a simple spreadsheet with all the information you'd need. Not so surprisingly, you can learn a lot about anyone by going through their Twitter timeline, so it can be quite useful to scrape all tweets from a particular user. The steps below will help you set up your Twitter account to be able to access live stream tweets. In this tutorial, we will introduce how to use Python to scrape live tweets from Twitter.

This means that you will not miss tweets because of network issues or other problems that may occur when using a tool to access the APIs yourself. Note, also, that some service providers can provide data from other social media platforms, such as Facebook.

Another option for acquiring an existing Twitter dataset is TweetSets, a web application that I've developed. Any tweets that have been deleted or become protected will not be available. One way to overcome the limitations of Twitter's public API for retrieving historical tweets is to find a dataset that has already been collected and meets your research requirements. Nonetheless, this is likely to be as complete a dataset as it is possible to get. You can retrieve the last 3,200 tweets from a user timeline and search the last 7-9 days of tweets. Subsequently, I will also use the data I pulled through Twitter's API to show the visualisation and analysis.
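The 3,200-tweet ceiling on a user timeline mentioned above is reached by paging backwards through the timeline, each time asking only for tweets older than the last one seen. A rough sketch of that paging logic follows. `fetch_page` is a hypothetical stand-in for whatever API client you use, the page size of 200 is an assumption about the per-request maximum, and only the 3,200 cap comes from the text.

```python
def collect_timeline(fetch_page, max_tweets=3200, page_size=200):
    """Page backwards through a user timeline until the cap or the end is reached.

    fetch_page(count, max_id) is a hypothetical callable that returns a list of
    tweet dicts, newest first, each with an integer "id". max_id=None means
    "start from the newest tweet".
    """
    collected = []
    max_id = None
    while len(collected) < max_tweets:
        count = min(page_size, max_tweets - len(collected))
        page = fetch_page(count=count, max_id=max_id)
        if not page:
            break  # no older tweets are available
        collected.extend(page)
        # Ask only for tweets strictly older than the last one we received.
        max_id = page[-1]["id"] - 1
    return collected
```

Passing the function in as a parameter keeps the paging logic independent of any particular library, so the same loop can be exercised with fake data before it is pointed at the real API.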


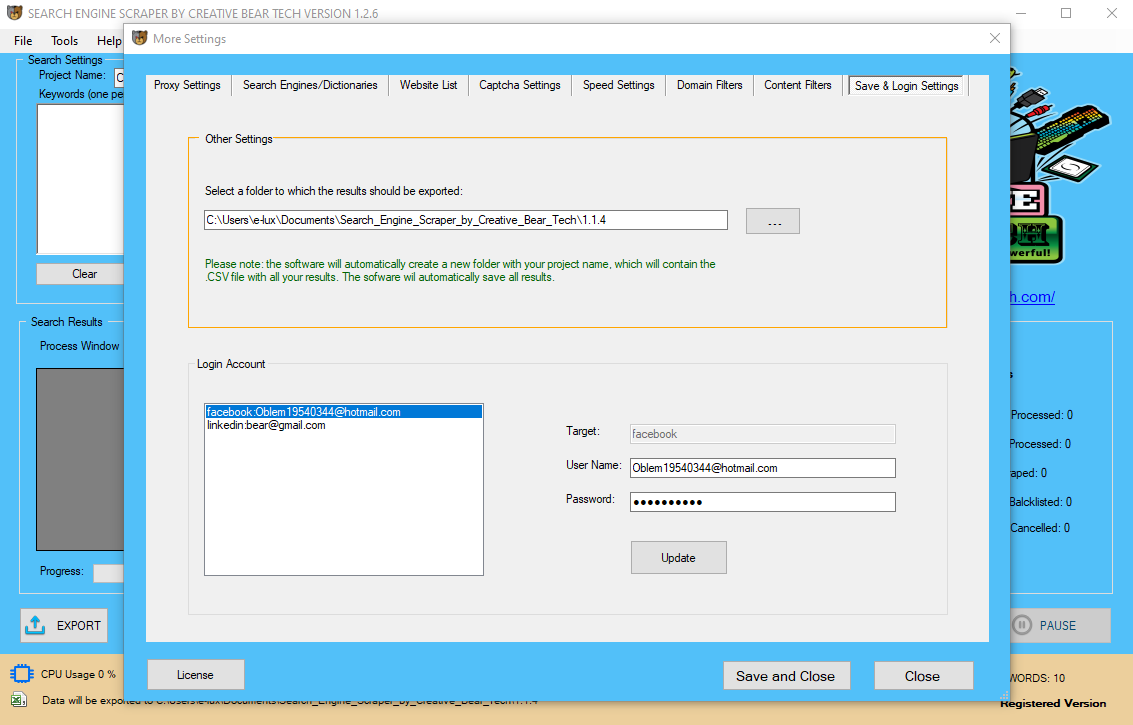

Reviewing your Twitter data can give you insights into the type of information stored for your account. It provides a simple way for you to view details about your account and to make changes as you see fit.

Twitter API: a Python wrapper for performing API requests such as searching for users and downloading tweets. This library handles all of the OAuth and API queries for you and provides them in a simple Python interface. Be sure to create a Twitter App and get your OAuth keys; you will need them to get access to Twitter's API.

Some providers offer data from the enterprise Twitter APIs, which have access to all historical tweets. TweetSets allows you to create your own dataset by querying and limiting an existing dataset. For example, you can create a dataset that contains only original tweets with the term “trump” from the Women's March dataset. If you are local, TweetSets will allow you to download the complete tweet; otherwise, only the tweet ids can be downloaded. Currently, TweetSets includes nearly half a billion tweets.

There can be various reasons to mine Twitter data, such as for your project, marketing, and others. Collecting the required data in a structured format can be done efficiently with the help of Twitter scraping software. I was facing the same problem and used the API, but couldn't find any way to get older data, so I am using code to collect Twitter data in real time for future use.

For example, Ellen DeGeneres has tweeted over 20k times, and that is still pretty low compared with some of the most prolific Twitter accounts out there. As a result, you may want to limit the number of tweets you scrape from a particular user. To do that, we'll give ParseHub a limit on the number of times it will scroll down and load more tweets.

Depending on the number of URLs you are getting data for, it might take a while for Excel to get the data. I wouldn't recommend pasting in lots of URLs at once. Next, we need to add the formulas needed to pull the Twitter data into Excel.

Search engine bots crawl pages to get the content to search and to generate the snippet previews you see beneath the link. At the end of the day, all bots should listen to whether or not a web page should be crawled.

Also, enter the Twitter username you want to download tweets from. In this example, we will scrape Donald Trump's Twitter page. The full option is also very useful for individual accounts. It is a network using all Twitter activity: tweets, tags, URLs and images. This data is very useful if you are trying to investigate certain Twitter users.

Once the celebrity name was associated with a Twitter handle, the next step was to again use Twitter's API to download the user's tweets and save them into a database. It's not an earth-shattering project, but it is a fun way for Twitter users to see who they tweet like and maybe discover a few interesting things about themselves in the process.

First, when considering a Twitter service provider, you need to know whether you are able to export your dataset from the service provider's platform. (All should allow you to export reports or analysis.) For most platforms, export is limited to 50,000 tweets per day. If you need the raw data to perform your own analysis or for data sharing, this may be an important consideration. Some providers offer datasets built by querying against an existing set of historical tweets. Despite what the sales representative may tell you, most Twitter service providers' offerings focus on marketing and business intelligence, not academic research. The notable exception is DiscoverText, which is focused primarily on supporting academic researchers. DiscoverText allows you to acquire data from the public Twitter Search API; purchase historical tweets through the Twitter data access tool, Sifter; or upload other kinds of textual data. Sifter provides free cost estimates and has a lower entry price point ($32.50) than purchasing from Twitter. Within the DiscoverText platform, tweets can be searched, filtered, de-duplicated, coded, and classified (using machine learning), along with a host of other functionality.

Connecting them are the connections (referred to in a network as edges). That means Twitter account @a tweeted and mentioned @b, @c, @d and @e. Before we get into the details of exactly how to capture data from Twitter for network visualisations and analysis, we first need to establish what we require to make a network visualisation.
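The edges described above, where account @a tweets and mentions @b, @c, @d and @e, can be derived directly from tweet text. A small sketch follows, assuming each tweet is a dict with `user` and `text` keys (a simplified shape of my own, not the API's full tweet object) and using a deliberately simple regular expression that would also match things like email addresses.

```python
import re
from collections import Counter

# Simplified pattern: an "@" followed by letters, digits or underscores.
MENTION_PATTERN = re.compile(r"@(\w+)")


def mention_edges(tweets):
    """Return a Counter mapping (source, target) pairs to the number of mentions."""
    edges = Counter()
    for tweet in tweets:
        source = tweet["user"].lower()
        for target in MENTION_PATTERN.findall(tweet["text"]):
            target = target.lower()
            if target != source:  # ignore self-mentions
                edges[(source, target)] += 1
    return edges


sample = [
    {"user": "a", "text": "Great chat with @b, @c, @d and @e today"},
    {"user": "b", "text": "Thanks @a!"},
]
for (source, target), weight in mention_edges(sample).items():
    print(source, "->", target, weight)
```

The resulting pairs and weights are the kind of edge list that network tools such as Gephi can import, typically as a CSV with source, target and weight columns.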

So far I've just shown you how to scrape a single element from a page. Where that becomes powerful is if you load in 20,000 Twitter profile URLs, giving you 20,000 pieces of data instead of one. Fortunately (given the subject of this post), Twitter profile pages are also well structured, which means we can use the Custom Scraper to extract the data we need.

Key for academics are features for measuring inter-coder reliability and adjudicating annotator disagreements. Some of these tools are focused on retrieving tweets from the API, while others will also do analysis of the Twitter data. For a more complete list, see the Social Media Research Toolkit from the Social Media Lab at Ted Rogers School of Management, Ryerson University.

This tutorial demonstrates how to scrape tweets for data analysis using Python and the Twitter API. You can scrape data within any specified dates; however, the Twitter website uses infinite scroll, which shows 20 tweets at a time. There are a number of tools available to mine or scrape data from Twitter. Twint is an advanced Twitter scraping tool written in Python that allows for scraping tweets from Twitter. You also have the option to schedule the scraper if you want to scrape Twitter data on a regular basis. Visit the Twitter application page and log in with your Twitter account to generate a series of access codes that allow you to scrape data from Twitter. The Search API can send 180 requests in a 15-minute window and returns a maximum of 100 tweets per request. However, you can increase this count by authenticating as an application instead of as a user. This can raise the rate limit to 450 requests and reduce the time consumed.

The price depends on both the length of the time period and the number of tweets; often, the cost is driven by the length of the time period, so shorter periods are more affordable. The cost may be feasible for some research projects, especially if the cost can be written into a grant. Further, I am not familiar with the conditions placed on the uses / sharing of the purchased dataset. For example, here at GW Libraries we have proactively built collections on a number of topics including Congress, the federal government, and news organizations.

If you do not have a Twitter account, you can also go to twitter.com and click the Settings link at the bottom of the page. From there you can access your Personalization and Data settings as well as your Twitter data.

With the right infrastructure, you can scrape Twitter for keywords or based on a timeframe. This tutorial shows you how to scrape historical data from Twitter's advanced search for free using the Twitter Crawler available on ScrapeHero Cloud. The PhantomBuster Twitter API is a good data scraping tool for extracting the profiles of key followers.
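To get a feel for what the Search API limits quoted above mean in practice (180 requests per 15-minute window as a user, 450 as an application, at most 100 tweets per request), here is a back-of-the-envelope calculation. It optimistically assumes every request returns a full page of 100 tweets, and the function names are mine.

```python
import math

WINDOW_MINUTES = 15
TWEETS_PER_REQUEST = 100  # maximum per request, per the limits quoted above


def tweets_per_window(requests_per_window):
    """Best-case number of tweets retrievable in one rate-limit window."""
    return requests_per_window * TWEETS_PER_REQUEST


def windows_needed(total_tweets, requests_per_window):
    """Number of rate-limit windows required to fetch total_tweets."""
    return math.ceil(total_tweets / tweets_per_window(requests_per_window))


def minimum_wait_minutes(total_tweets, requests_per_window):
    """Minutes spent waiting for windows to reset (the last window needs no wait)."""
    return max(windows_needed(total_tweets, requests_per_window) - 1, 0) * WINDOW_MINUTES


for label, limit in [("user auth", 180), ("app auth", 450)]:
    print(label, tweets_per_window(limit), "tweets per window;",
          minimum_wait_minutes(100_000, limit), "minutes of waiting for 100,000 tweets")
```

Under these assumptions, user authentication tops out at 18,000 tweets per window and application authentication at 45,000, so collecting 100,000 tweets means waiting out at least five window resets in the first case and two in the second.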
